LiteLLM Supply Chain Poisoning: A Full-Path Analysis from Trivy Compromise to Zero-Click Malicious .pth Injection

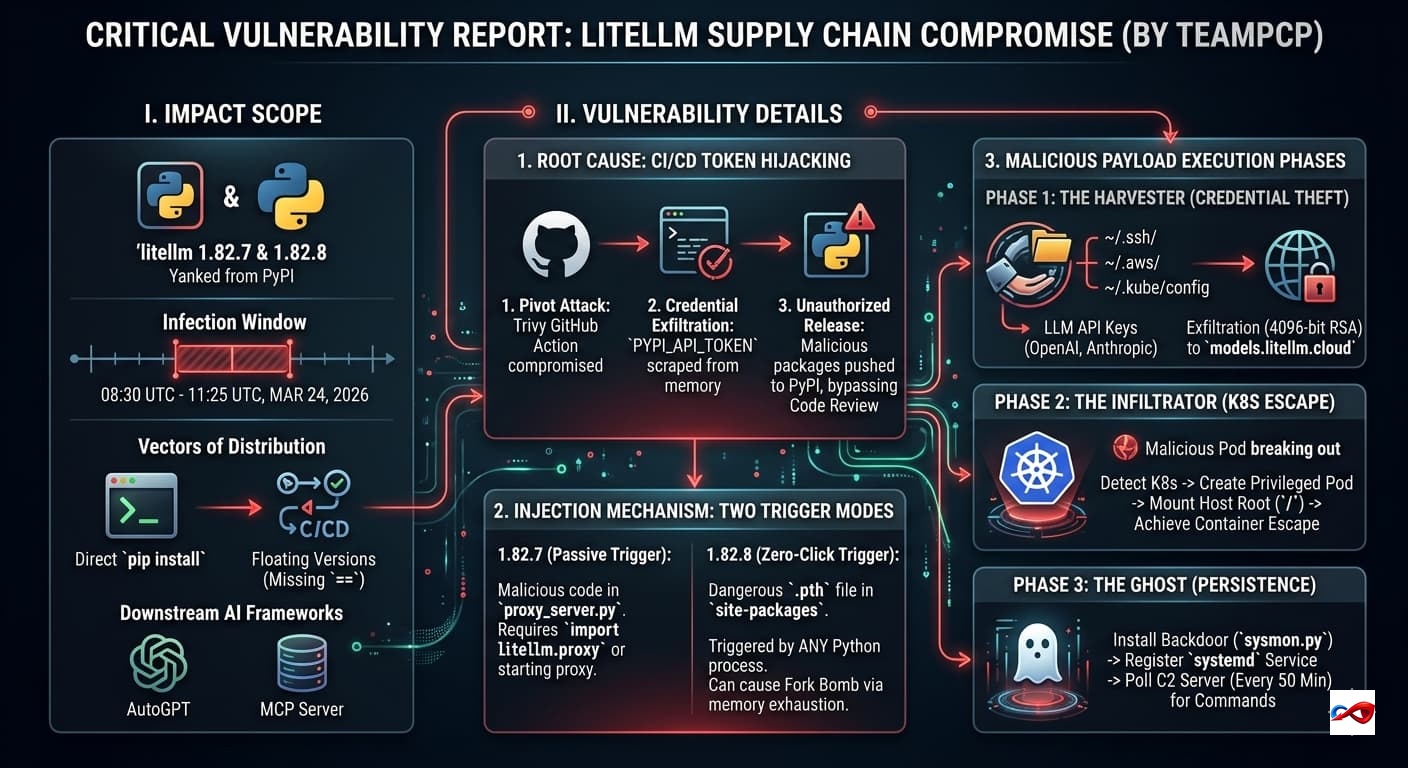

The popular AI framework LiteLLM has fallen victim to a severe supply chain poisoning attack. The threat actor, TeamPCP, compromised the upstream tool Trivy to steal publishing tokens, then published malicious releases to PyPI that steal credentials, attempt container escape, and, through a bug in the payload, can fork-bomb the host.

- Release Date: March 24, 2026

- Risk Level: Critical

- Vulnerability ID: N/A

- Category: Supply Chain Poisoning

I. Impact Scope

- Affected Versions: `litellm` 1.82.7 and 1.82.8 (currently yanked from PyPI).

- Infection Window: Environments that performed installations or builds between 08:30 UTC and 11:25 UTC on March 24, 2026.

- Vectors of Distribution:

  - Developer Terminals: Direct installation via `pip install`.

  - CI/CD Pipelines: Automated workflows using floating versions (missing `==` constraints).

  - Downstream AI Frameworks: Docker images or Agent frameworks (e.g., AutoGPT, MCP Server) dependent on LiteLLM.
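The two exposure checks implied by this list can be sketched in Python: whether a given version is one of the poisoned releases, and whether a requirements file leaves `litellm` without an exact pin. The helper names and the sample input are illustrative assumptions, not part of pip or LiteLLM tooling.

```python
import re

# Versions named in this advisory as poisoned.
AFFECTED_VERSIONS = {"1.82.7", "1.82.8"}

# Matches a requirement line for litellm itself (optionally with extras),
# but not for differently named packages such as "litellm-extra".
_LITELLM_LINE = re.compile(
    r"^\s*litellm(?![\w.-])(\[[^\]]*\])?\s*(?P<spec>[^#;]*)",
    re.IGNORECASE,
)


def is_affected(version: str) -> bool:
    """Return True if the version string is one of the poisoned releases."""
    return version.strip() in AFFECTED_VERSIONS


def unpinned_litellm_lines(requirements_text: str) -> list[str]:
    """Return litellm requirement lines that lack an exact '==' pin."""
    findings = []
    for line in requirements_text.splitlines():
        match = _LITELLM_LINE.match(line)
        if match is None:
            continue  # not a litellm requirement (or a comment line)
        spec = match.group("spec").strip()
        if not spec.startswith("=="):
            findings.append(line.strip())
    return findings
```

A line such as `litellm>=1.80` would be reported, while `litellm[proxy]==1.82.6` would not. This is only a text-level heuristic; it does not resolve transitive dependencies or lock files.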

II. Vulnerability Details (Technical Deep Dive)

The attack by TeamPCP highlights a sophisticated breach of the modern AI development ecosystem.

1. Root Cause: CI/CD Token Hijacking

The breach was not a code-level bug but a compromise of the DevOps toolchain:

- Pivot Attack: Attackers compromised the GitHub Action of Trivy, a security scanning tool.

- Credential Exfiltration: During LiteLLM's automated testing, the poisoned Trivy runtime scraped the `PYPI_API_TOKEN` from process memory.

- Unauthorized Release: Using this token, attackers bypassed code review and pushed malicious wheel packages directly to PyPI. This explains why the GitHub source remained "clean" while the distributed binaries were "poisoned."
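Because the malicious wheels were uploaded straight to PyPI, reviewing the repository says nothing about what actually gets installed. One way to catch that divergence is to compare an artifact's SHA-256 digest against a value recorded from a trusted build. The sketch below assumes such a trusted digest is available; the function names are hypothetical.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 65536) -> str:
    """Stream a file from disk and return its SHA-256 hex digest."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_trusted_digest(path: Path, trusted_hex: str) -> bool:
    """Return True only if the file's digest equals the trusted value."""
    return hmac.compare_digest(sha256_of(path), trusted_hex.lower())
```

pip can enforce the same idea at install time through its hash-checking mode (`pip install --require-hashes -r requirements.txt`), which rejects any artifact whose digest is not listed in the requirements file.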

2. Injection Mechanism: Two Destructive Trigger Modes

- 1.82.7 (Passive Trigger): Malicious code was embedded in `litellm/proxy/proxy_server.py`.

  - Trigger: Requires an explicit `import litellm.proxy` or starting the `litellm --proxy` service to execute the Base64-encoded payload.

- 1.82.8 (Zero-Click Trigger - Extremely Dangerous): Utilized a `litellm_init.pth` file.

  - Mechanism: At startup, Python's `site` module processes every `.pth` file in `site-packages` and executes any line that begins with `import`.

  - Impact: Merely installing the package weaponizes the environment. Any Python process (even `python --version`) will trigger the payload.

  - Secondary Effect: A logic bug in the payload's subprocess handling causes an infinite recursive loop, resulting in a Fork Bomb that crashes the host via memory exhaustion.
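The `.pth` mechanism can be audited directly. The sketch below lists every line in a directory's `.pth` files that the `site` module would execute, that is, lines starting with `import` followed by a space or tab. The function name is an assumption for illustration, and the directory to scan must be supplied by the caller.

```python
from pathlib import Path


def executable_pth_lines(directory: Path) -> list[tuple[str, int, str]]:
    """List (file name, line number, line) for .pth lines Python would execute.

    The site module executes a .pth line when it starts with "import"
    followed by a space or tab; other lines are treated as path entries.
    """
    findings = []
    for pth_file in sorted(directory.glob("*.pth")):
        text = pth_file.read_text(encoding="utf-8", errors="replace")
        for number, line in enumerate(text.splitlines(), start=1):
            if line.startswith(("import ", "import\t")):
                findings.append((pth_file.name, number, line))
    return findings
```

Some legitimate packages also ship `import` lines in `.pth` files, so each finding needs manual review rather than automatic deletion. Since a poisoned environment runs the payload whenever its interpreter starts normally, it is safer to run a scan like this from a separate, clean interpreter, or with `python -S`, which skips the `site` module and therefore `.pth` processing.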

3. Malicious Payload Execution Phases

- Phase 1: The Harvester (Credential Theft)

  - Scans for SSH keys (`~/.ssh/`), Cloud credentials (`~/.aws/`), and Kubernetes configs (`~/.kube/config`).

  - Extracts LLM API Keys (OpenAI, Anthropic, etc.) from `.env` files and environment variables.

  - Encrypts the harvested data with a 4096-bit RSA key and exfiltrates it to `models.litellm.cloud`.

- Phase 2: The Infiltrator (K8s Escape)

  - Detects K8s environments and attempts to create a Privileged Pod (`node-setup-xxx`) in the `kube-system` namespace.

  - Mounts the host root directory (`/`) to achieve Container Escape, gaining full control of the underlying node.

- Phase 3: The Ghost (Persistence)

  - Installs a backdoor at `~/.config/sysmon/sysmon.py`.

  - Registers a `systemd` user service (`sysmon.service`) masquerading as system telemetry.

  - Polls a C2 server every 50 minutes for remote Python command execution.
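The Phase 3 persistence artifacts are fixed paths under the user's home directory, so their presence can be checked mechanically. A minimal sketch, assuming the two paths named in this advisory are the only ones of interest; the function and constant names are hypothetical.

```python
from pathlib import Path

# Persistence artifacts named in this advisory, relative to a home directory.
PERSISTENCE_PATHS = (
    ".config/sysmon/sysmon.py",
    ".config/systemd/user/sysmon.service",
)


def find_persistence_artifacts(home: Path) -> list[Path]:
    """Return the advisory's persistence paths that exist under the given home."""
    return [home / rel for rel in PERSISTENCE_PATHS if (home / rel).exists()]
```

Calling `find_persistence_artifacts(Path.home())` covers the current user only; on shared hosts and build agents each account's home directory would need the same check. An empty result does not prove the host is clean, since it only tests for these two known paths.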

III. Remediation Actions

1. Immediate Mitigation

- Force Uninstall & Rollback:

  `pip uninstall litellm -y`

  `pip install litellm==1.82.6`

- Clean Cache:

  `pip cache purge`

  `rm -rf ~/.cache/pip`

2. System Sanitization

- Remove Backdoors:

  `rm -rf ~/.config/sysmon/`

  `rm ~/.config/systemd/user/sysmon.service`

  - Check `site-packages` for `litellm_init.pth` and delete if found.

- Audit K8s: Check for unauthorized Pods starting with `node-setup` or using `alpine` images in system namespaces.
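The Kubernetes audit can be sketched as a filter over a pod list in the JSON shape that `kubectl get pods --all-namespaces -o json` produces. The function name and the default set of system namespaces are assumptions; the indicators (a `node-setup` name prefix or an `alpine` image) are the ones given in this advisory.

```python
def suspicious_pods(pod_list: dict, system_namespaces=("kube-system",)) -> list[str]:
    """Return 'namespace/name' for system-namespace pods matching the indicators."""
    flagged = []
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        namespace = meta.get("namespace", "")
        name = meta.get("name", "")
        if namespace not in system_namespaces:
            continue
        containers = pod.get("spec", {}).get("containers", [])
        # Reduce "registry/path/alpine:tag" or "alpine@sha256:..." to "alpine".
        uses_alpine = any(
            c.get("image", "").split("@")[0].split("/")[-1].split(":")[0] == "alpine"
            for c in containers
        )
        if name.startswith("node-setup") or uses_alpine:
            flagged.append(f"{namespace}/{name}")
    return flagged
```

In practice the input would come from `json.loads` applied to the `kubectl` output. Matches are leads for investigation, not proof of compromise, since legitimate workloads may also run `alpine` images.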

3. Critical Credential Rotation

Assume all secrets are compromised. You must rotate:

- All LLM API Keys (OpenAI, Anthropic, etc.).

- Cloud Provider Access/Secret Keys.

- SSH Private Keys and Database Connection Strings.
